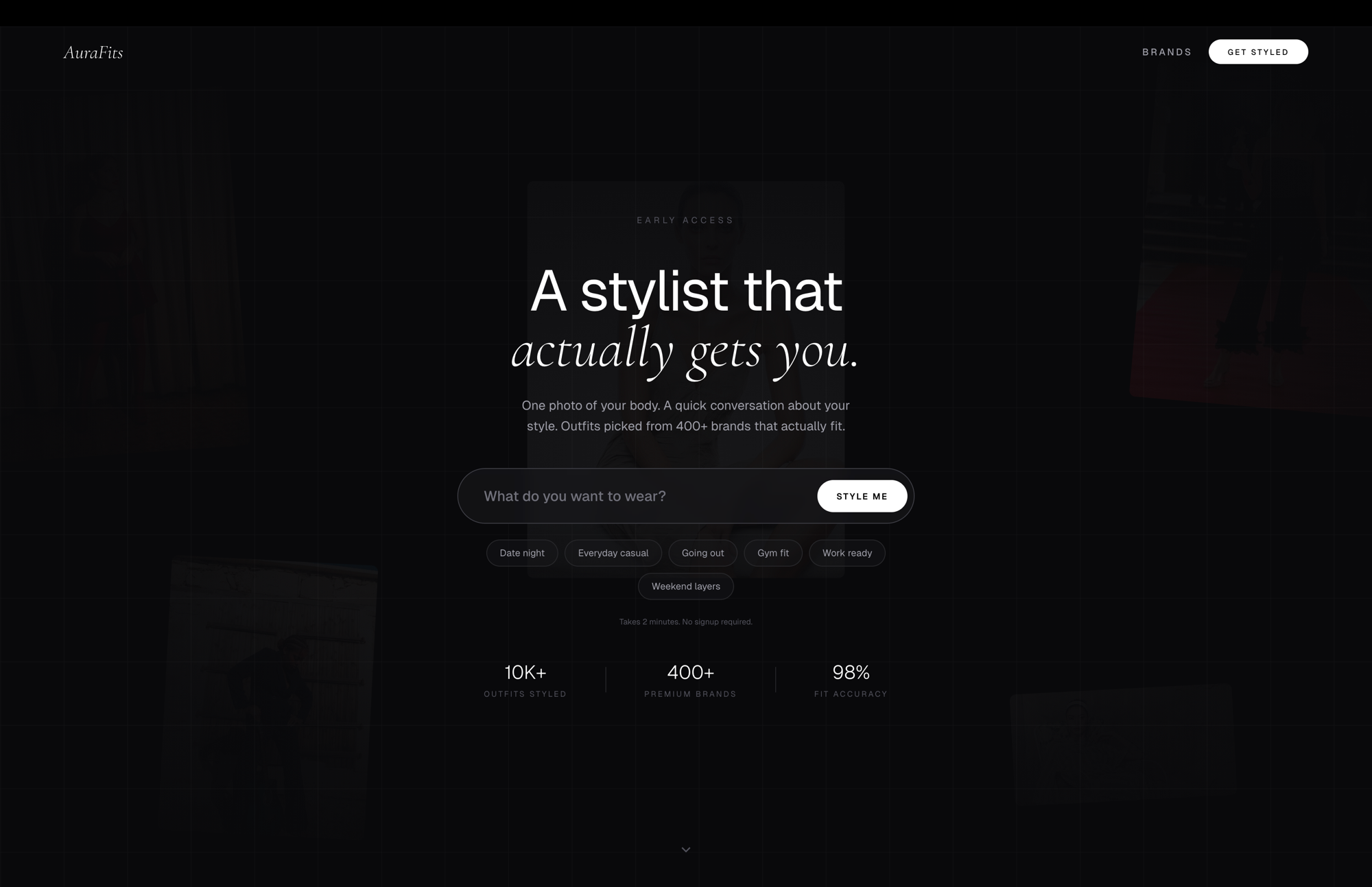

AuraFits: Multimodal AI Advisory Platform for Specialty Retail

Executive Summary

Specialty retail faces a persistent gap between online browsing and in-store expertise. Customers want personalized recommendations that account for body type, activity goals, and aesthetic taste, but human advisors are expensive, inconsistent, and limited by what they can remember across thousands of SKUs.

AuraFits closes that gap with a multimodal AI pipeline: a single photo and a short prompt produce ranked, inventory-backed gear recommendations drawn from 52 live Shopify storefronts. The system uses Google Gemini for vision analysis, adaptive question generation, and product ranking, with Upstash Vector providing semantic search across a continuously synced product catalog.

The Challenge

The Expertise Bottleneck

Specialty retail (performance running, athletic recovery, designer streetwear) depends on product knowledge that takes years to develop. A seasoned associate can look at a customer, ask a few questions, and recommend the right shoe from 200+ options. But that expertise is expensive, scarce, and does not scale.

The typical customer journey in specialty retail breaks down at three points:

- Discovery friction: Customers don’t know what they don’t know. Browsing filters (size, color, price) cannot capture “I’m training for a half marathon and my ankles pronate” or “I want a Rick Owens-adjacent look for under $400.”

- Expertise scarcity: A knowledgeable associate costs $45,000-$65,000/year in salary alone [1]. Most stores cannot staff experts across every product category during every shift.

- Inventory blindness: Even experienced associates struggle to recall which of 2,000+ SKUs are currently in stock, especially across multiple vendor lines.

Why Existing Solutions Fall Short

Existing retail AI tools fall into four broad categories, and each leaves gaps:

| Approach | How It Works | Limitation |

|---|---|---|

| Collaborative filtering | "Customers who bought X also bought Y" | No body awareness, no contextual understanding of goals |

| Quiz-based recommenders | Fixed decision tree with predetermined outcomes | Cannot adapt to novel requests; limited by author's foresight |

| Visual search | "Find products that look like this photo" | Matches aesthetics only; ignores fit, function, and body type |

| Chatbot product finders | Keyword matching against catalog metadata | No vision, no body analysis, brittle to natural language |

None of these combine vision (analyzing the customer’s body), conversation (understanding their goals and constraints), and live inventory (only recommending what’s actually in stock) in a single flow.

Multi-Vendor Complexity

The target deployment environment (multi-brand specialty retailers) introduces additional complexity:

- 52 independent Shopify storefronts, each with different data conventions

- Vendor names vary across stores (“Nike”, “NIKE”, “Nike Netherlands BV”, “Nike-Footwear”)

- Product categorization is inconsistent (one store’s “Running Shoes” is another’s “Athletic Footwear / Road”)

- Inventory changes daily; static catalogs go stale within hours

The Solution

System Architecture

The platform is built as a Next.js 16 application deployed on Vercel, with Google Gemini providing multimodal AI capabilities and Upstash Vector serving as the semantic product index.

flowchart TB

subgraph client [Client Browser]

A[Camera / Upload]

B[Movement Goal Input]

C[Adaptive Q&A UI]

D[Product Results View]

end

subgraph wizard [AI Wizard Pipeline]

direction TB

E["/api/wizard/analyze<br/>Biometric Vision Analysis"]

F["/api/wizard/questions<br/>Adaptive Question Generation"]

G["/api/wizard/recommend<br/>Vector Search + LLM Ranking"]

H["/api/wizard/outfit-image<br/>AI Outfit Visualization"]

end

subgraph ai [Google Gemini]

I["gemini-3-flash-preview<br/>Vision + Reasoning"]

J["gemini-embedding-001<br/>1,536-dim Embeddings"]

K["nano-banana-pro-preview<br/>Image Generation"]

end

subgraph inventory [Inventory Layer]

L[52 Shopify Stores<br/>Public JSON API]

M["/api/wizard/sync<br/>Daily Cron 06:00 UTC"]

N[Upstash Vector DB<br/>Embeddings + Metadata]

end

A -->|Base64 JPEG<br/>max 1024px, q=0.7| E

B --> F

E -->|Biometric Profile| C

F -->|Dynamic Questions| C

C -->|Answers + Profile| G

G -->|Ranked Products| D

D -->|On Demand| H

E --> I

F --> I

G --> I

G --> J

G --> N

H --> K

L -->|products.json| M

M -->|Embed + Upsert| N

M --> J

The architecture separates three concerns:

- Client-side capture and interaction: Camera access, image downsampling, and a step-by-step wizard UI

- AI inference pipeline: Three required API calls plus an optional fourth for outfit visualization, run in sequence, each targeting a specific Gemini model capability

- Inventory synchronization: A daily cron job that crawls 52 Shopify stores, embeds product descriptions, and maintains a vector index

The Wizard Flow

The customer experience follows six steps, each backed by a distinct technical operation:

flowchart LR

subgraph step1 [Step 1]

S1["Movement Goal<br/>Free text + quick pills"]

end

subgraph step2 [Step 2]

S2["Body Scan<br/>Camera capture + Gemini vision"]

end

subgraph step3 [Step 3]

S3["Scan Results<br/>Biometric profile + color palette"]

end

subgraph step4 [Step 4]

S4["Email Gate<br/>Lead capture"]

end

subgraph step5 [Step 5]

S5["Adaptive Questions<br/>4-5 AI-generated questions"]

end

subgraph step6 [Step 6]

S6["Recommendations<br/>Ten Picks or Two Fits"]

end

S1 --> S2 --> S3 --> S4 --> S5 --> S6

Step 1 triggers a debounced prefetch (800ms) of follow-up questions, so they are cached before the user finishes the biometric scan. All four quick-pill options are prefetched on mount.
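The prefetch described in Step 1 can be sketched as a module-level cache keyed by the goal text, wrapped in an 800ms debounce. This is a minimal sketch under stated assumptions: the Question shape, the fetcher signature, and the goal normalization are hypothetical and not taken from the AuraFits codebase.

```typescript
// Hypothetical shape of a generated question; the real schema is not shown in this document.
type Question = { text: string; options: string[] };
type QuestionFetcher = (goal: string) => Promise<Question[]>;

// Module-level cache: one in-flight or completed request per normalized goal.
const questionCache = new Map<string, Promise<Question[]>>();

// Assumption: goals are compared case-insensitively with surrounding whitespace removed.
function normalizeGoal(goal: string): string {
  return goal.trim().toLowerCase();
}

// Start a background request for the goal, or reuse the one already cached.
function prefetchQuestions(goal: string, fetcher: QuestionFetcher): Promise<Question[]> {
  const key = normalizeGoal(goal);
  const cached = questionCache.get(key);
  if (cached) return cached;
  const pending = fetcher(goal).catch((err) => {
    questionCache.delete(key); // failed requests are not kept in the cache
    throw err;
  });
  questionCache.set(key, pending);
  return pending;
}

// Debounce wrapper: only the last call within delayMs triggers a prefetch.
function debouncedPrefetch(fetcher: QuestionFetcher, delayMs = 800): (goal: string) => void {
  let timer: ReturnType<typeof setTimeout> | undefined;
  return (goal) => {
    if (timer !== undefined) clearTimeout(timer);
    timer = setTimeout(() => {
      prefetchQuestions(goal, fetcher).catch(() => {}); // best effort; errors surface on the real request
    }, delayMs);
  };
}
```

Caching the promise rather than the resolved value means a second request for the same goal joins the in-flight call instead of starting a new one.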

Step 2 captures a single photo, downsamples it client-side to max 1024px at JPEG quality 0.7, and sends it to Gemini’s vision model. During the 10-15 second analysis, the UI displays an animated body-scan overlay and a product teaser carousel drawn from the vector DB.
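The downsampling step comes down to a small aspect-ratio calculation. The sketch below assumes that "max 1024px" refers to the longest side and that smaller photos are never upscaled; neither detail is confirmed by the source, and fitWithin is a hypothetical helper name.

```typescript
type PixelSize = { width: number; height: number };

// Scale a photo so its longest side is at most maxSide, preserving aspect ratio.
// Photos that already fit are returned unchanged (no upscaling).
function fitWithin(src: PixelSize, maxSide = 1024): PixelSize {
  const longest = Math.max(src.width, src.height);
  if (longest <= maxSide) return { width: src.width, height: src.height };
  const scale = maxSide / longest;
  return {
    width: Math.round(src.width * scale),
    height: Math.round(src.height * scale),
  };
}

// In the browser, the result would size a canvas; after drawing the photo onto it,
// canvas.toDataURL("image/jpeg", 0.7) yields the Base64 JPEG at quality 0.7.
```

A 4000x3000 capture, for example, comes out at 1024x768.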

Step 6 branches into two output modes, detected automatically from the user’s goal:

- Ten Picks: 10 products from the same category, each assigned a unique archetype (Comfort Pick, Performance Pick, Budget Pick, Style Pick, etc.)

- Two Fits: 2 complete coordinated outfits of 5-6 items each (shoes, bottoms, top, layer, accessory), with optional AI-generated outfit visualization

Technical Implementation

Biometric Vision Analysis

The first AI call sends the customer’s photo to gemini-3-flash-preview with a structured prompt requesting analysis across two dimensions:

Physical Profile:

- Body type classification (ectomorph / mesomorph / endomorph)

- Build estimate and posture assessment

- Joint alignment and mobility indicators

- Muscle distribution patterns

Aesthetic Profile:

- Skin tone and complexion

- Hair color and style

- Color season classification (Spring, Summer, Autumn, Winter)

- Style vibe assessment

- Personal color palette: 8-9 colors organized into 3 outfit combinations (Everyday, Bold, Tonal)

The response is parsed from <biometric_analysis> XML tags. If the photo does not contain a person, Gemini returns a <no_person> tag and the UI prompts for a new photo.
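A minimal sketch of this parsing step follows, assuming the tag body is JSON (as the structured-output table later in this document states). The function names, the result type, and the bodyType field in the usage example are hypothetical.

```typescript
type TagResult<T> =
  | { kind: "ok"; data: T }
  | { kind: "no_person" }
  | { kind: "error"; reason: string };

// Return the trimmed text between <tag> and </tag>, or null when the tag is absent.
function extractTag(response: string, tag: string): string | null {
  const match = response.match(new RegExp(`<${tag}>([\\s\\S]*?)</${tag}>`));
  return match ? match[1].trim() : null;
}

// Parse the biometric response: no_person wins, then the JSON body of biometric_analysis.
function parseBiometric<T>(response: string): TagResult<T> {
  if (/<no_person\s*\/?>/.test(response)) return { kind: "no_person" };
  const body = extractTag(response, "biometric_analysis");
  if (body === null) return { kind: "error", reason: "missing <biometric_analysis> tag" };
  try {
    return { kind: "ok", data: JSON.parse(body) as T };
  } catch {
    return { kind: "error", reason: "tag body is not valid JSON" };
  }
}
```

Any prose the model writes outside the tags is ignored, which is what makes mixed-content responses workable.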

No computer vision libraries are used (no OpenCV, MediaPipe, or TensorFlow.js). Body estimation relies entirely on Gemini’s multimodal vision capabilities via natural language prompting.

Adaptive Question Generation

The second AI call receives the customer’s movement goal and generates 4-5 follow-up questions, each with 5-8 options. The prompt first classifies the goal into one of three scopes, which determines the question strategy:

| Detected Scope | Example Goal | Question Strategy |

|---|---|---|

| Full Fit | "Complete gym outfit for heavy lifting" | Questions span all garment categories; style cohesion matters |

| Specific Product | "Trail running shoes for rocky terrain" | Deep-dive on technical requirements for one category |

| Vague / Open | "Something nice for going out" | Clarifying questions to narrow intent before product-level detail |

The system also detects intent type (Performance, Fashion, or Hybrid) to weight questions appropriately. A Performance query gets questions about terrain, cushion preference, and pronation. A Fashion query gets questions about silhouette, color mood, and brand affinity.

Questions are parsed from <wizard_questions> XML tags. An “Other” option with free-text input is appended automatically by the UI (not generated by the model).

Vector Search and Product Ranking

The recommendation engine combines semantic vector search with LLM-based ranking in a two-stage pipeline:

flowchart TB

subgraph stage1 [Stage 1: Semantic Retrieval]

A[Goal + Answers<br/>Concatenated Query]

B["gemini-embedding-001<br/>1,536-dim Vector"]

C[Upstash Vector<br/>Cosine Similarity Search]

D[150 Candidate Products]

end

subgraph balance [Category Balancing]

E{Outfit Mode?}

F["Balance across categories<br/>shoes, tops, bottoms, layers, accessories"]

G["Store diversity cap<br/>max topK/5 per store"]

end

subgraph stage2 [Stage 2: LLM Ranking]

H["gemini-3-flash-preview<br/>+ Biometric Profile<br/>+ Customer Photo<br/>+ All Answers"]

I["Ranked Product IDs<br/>+ Rationale per Pick"]

end

subgraph hydrate [Hydration]

J["Match IDs to Catalog<br/>Restore images, URLs, prices"]

K[Final Recommendations]

end

A --> B --> C --> D

D --> E

E -->|Yes| F --> H

E -->|No| G --> H

H --> I --> J --> K

Stage 1 embeds the concatenated query (goal + all answers) using gemini-embedding-001 and retrieves 150 candidate products from Upstash Vector via cosine similarity.

For outfit-mode queries, the system fetches 3x the requested topK and then balances results across garment categories. For single-category queries, it enforces store diversity (no more than topK/5 products from any one store) to prevent a single retailer from dominating results.
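The store diversity rule for single-category queries can be sketched as a single pass over candidates sorted by similarity. The Candidate shape is hypothetical, and rounding topK/5 down with a minimum of one product per store is an assumption; the source only gives the topK/5 ratio.

```typescript
// Hypothetical candidate shape: an id, the originating store, and a similarity score.
type Candidate = { id: string; storeName: string; score: number };

// Keep at most floor(topK / 5) products per store (at least 1), best matches first.
function capPerStore(candidates: Candidate[], topK: number): Candidate[] {
  const perStoreMax = Math.max(1, Math.floor(topK / 5));
  const counts = new Map<string, number>();
  const kept: Candidate[] = [];
  // Sort a copy by descending score so each store keeps its strongest matches.
  const sorted = candidates.slice().sort((a, b) => b.score - a.score);
  for (const c of sorted) {
    const used = counts.get(c.storeName) ?? 0;
    if (used >= perStoreMax) continue; // this store has reached its cap
    counts.set(c.storeName, used + 1);
    kept.push(c);
    if (kept.length === topK) break;
  }
  return kept;
}
```

With topK = 10 the cap is two products per store, so a store that supplies four of the closest matches still contributes only its top two.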

Stage 2 passes all candidates to Gemini along with the customer’s biometric profile, original photo, and all question answers. The model ranks products, assigns archetypes (in Ten Picks mode) or outfit slots (in Two Fits mode), and provides a brief rationale for each selection. Product IDs in the response must match catalog entries exactly; the system hydrates them with images, URLs, and pricing from the normalized product data.
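The hydration step amounts to a lookup of ranked IDs against the normalized catalog. In this sketch the type names are hypothetical, and silently dropping IDs that do not match a catalog entry is an assumption; the source only states that IDs must match exactly.

```typescript
type CatalogProduct = { id: string; name: string; price: number; imageUrl: string; productUrl: string };
type RankedPick = { id: string; rationale: string };
type Recommendation = CatalogProduct & { rationale: string };

// Join the model's ranked picks back to full catalog records, preserving rank order.
function hydrate(picks: RankedPick[], catalog: CatalogProduct[]): Recommendation[] {
  const byId = new Map<string, CatalogProduct>();
  for (const product of catalog) byId.set(product.id, product);
  const out: Recommendation[] = [];
  for (const pick of picks) {
    const product = byId.get(pick.id);
    if (!product) continue; // assumption: picks with unknown ids are dropped
    out.push({ ...product, rationale: pick.rationale });
  }
  return out;
}
```

Because images, URLs, and prices come from the catalog rather than the model output, the model only has to return IDs and rationale.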

Embedding Specifications

| Parameter | Value | Notes |

|---|---|---|

| Model | gemini-embedding-001 | Google's general-purpose embedding model |

| Dimensions | 1,536 | High-dimensional for fine-grained similarity |

| Embedding Text | Name + Type + Vendor + Store + Color Tags + Tags (8) + Description (80 chars) | Composite text per product |

| Vector ID Format | {storeName}::{productUrl} | Stable, unique per product across all stores |

| Metadata Fields | name, price, imageUrl, productUrl, storeName, vendor, description, productType, tags, _hash | Full product context stored alongside each vector |
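The composite embedding text and vector ID from the table above could be built roughly as follows. The field order, the tag limit of 8, the 80-character description, and the ID format come from the table; the " | " separator, the colorTags field name, and the function names are assumptions made for illustration.

```typescript
type IndexedProduct = {
  name: string;
  productType: string;
  vendor: string;
  storeName: string;
  colorTags: string[]; // hypothetical field; the source lists "Color Tags" without naming it
  tags: string[];
  description: string;
  productUrl: string;
};

// Name + Type + Vendor + Store + Color Tags + first 8 tags + first 80 chars of description.
function embeddingText(p: IndexedProduct): string {
  const parts = [
    p.name,
    p.productType,
    p.vendor,
    p.storeName,
    p.colorTags.join(", "),
    p.tags.slice(0, 8).join(", "),
    p.description.slice(0, 80),
  ];
  // Empty parts are skipped so the text carries no dangling separators.
  return parts.filter((part) => part.length > 0).join(" | ");
}

// Vector ID format from the table: {storeName}::{productUrl}
function vectorId(p: IndexedProduct): string {
  return `${p.storeName}::${p.productUrl}`;
}
```

Keying the ID on store and URL rather than on product name keeps it stable when a title or price changes, so a changed product overwrites its own vector on upsert.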

Inventory Synchronization

The inventory pipeline runs as a Vercel Cron Job, scheduled daily at 06:00 UTC via vercel.json:

{

"crons": [

{

"path": "/api/wizard/sync",

"schedule": "0 6 * * *"

}

]

}

The sync process:

- Crawl: Fetches product data from all 52 Shopify stores via their public JSON API (/products.json?limit=250), paginating through all available products. Each store request has an 8-second timeout; failures are handled via Promise.allSettled().

- Normalize: Each raw Shopify product is transformed into a NormalizedProduct record (name, price, imageUrl, productUrl, storeName, vendor, description stripped to 200 characters, productType, tags).

- Hash: A content hash (name|price|description|tags|imageUrl) is computed for each product to detect changes.

- Diff: Existing vectors in Upstash are compared against incoming hashes. Only new or changed products are re-embedded. Deleted products (present in the index but absent from the crawl) are removed.

- Embed and Upsert: Changed products are embedded in batches of 100 via gemini-embedding-001 and upserted to Upstash Vector. Rate limit responses (HTTP 429) trigger exponential backoff (20s, 40s) with a maximum of 3 retries.
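The Hash and Diff steps can be sketched as follows. The hashed fields and their order follow the list above; the hash function itself is not specified in the source, so a 32-bit FNV-1a stands in here purely to keep the example dependency-free. SyncProduct, planSync, and the map of existing hashes are hypothetical names.

```typescript
type SyncProduct = {
  id: string;
  name: string;
  price: number;
  description: string;
  tags: string[];
  imageUrl: string;
};

// 32-bit FNV-1a over UTF-16 code units; a stand-in for whatever hash the real sync uses.
function fnv1a(text: string): string {
  let hash = 0x811c9dc5;
  for (let i = 0; i < text.length; i++) {
    hash ^= text.charCodeAt(i);
    hash = Math.imul(hash, 0x01000193);
  }
  return (hash >>> 0).toString(16);
}

// Hash of name|price|description|tags|imageUrl, as described in the Hash step.
function contentHash(p: SyncProduct): string {
  return fnv1a([p.name, String(p.price), p.description, p.tags.join(","), p.imageUrl].join("|"));
}

type SyncPlan = { toUpsert: SyncProduct[]; toDelete: string[] };

// Compare stored hashes (vector id -> hash) with the fresh crawl.
function planSync(existing: Map<string, string>, incoming: SyncProduct[]): SyncPlan {
  const seen = new Set<string>();
  const toUpsert: SyncProduct[] = [];
  for (const p of incoming) {
    seen.add(p.id);
    // New products have no stored hash; changed products have a different one.
    if (existing.get(p.id) !== contentHash(p)) toUpsert.push(p);
  }
  // Anything in the index that the crawl no longer returns is scheduled for deletion.
  const toDelete = Array.from(existing.keys()).filter((id) => !seen.has(id));
  return { toUpsert, toDelete };
}
```

Only the products in toUpsert reach the embedding model, which is what keeps embedding cost proportional to the number of changes rather than to catalog size.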

The maxDuration for the sync endpoint is set to 300 seconds (5 minutes), sufficient to crawl and process the full catalog.
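The rate-limit handling in the embed step can be sketched as a retry wrapper. This sketch reads the stated figures as three attempts separated by waits of 20s and 40s, which is an interpretation; the source does not give the exact retry accounting. The status field on the error and all function names are hypothetical.

```typescript
const BASE_DELAY_MS = 20000;

// Delay before retry number `retry` (0-based): 20 s, then 40 s, doubling each time.
function backoffDelayMs(retry: number): number {
  return BASE_DELAY_MS * 2 ** retry;
}

// Assumption: rate-limit failures surface as an error object carrying status 429.
function isRateLimited(err: unknown): boolean {
  return typeof err === "object" && err !== null && (err as { status?: number }).status === 429;
}

// Run task, retrying only on HTTP 429 until maxAttempts is exhausted.
async function withBackoff<T>(
  task: () => Promise<T>,
  sleep: (ms: number) => Promise<void> = (ms) => new Promise<void>((resolve) => setTimeout(resolve, ms)),
  maxAttempts = 3,
): Promise<T> {
  for (let attempt = 0; ; attempt++) {
    try {
      return await task();
    } catch (err) {
      const attemptsLeft = maxAttempts - (attempt + 1);
      if (!isRateLimited(err) || attemptsLeft === 0) throw err;
      await sleep(backoffDelayMs(attempt));
    }
  }
}
```

Under this reading, the worst case adds 60 seconds of waiting per batch, which matters against the 300-second maxDuration budget.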

Vendor Name Clustering

Raw Shopify data contains inconsistent vendor names. The system resolves this with a multi-stage deduplication pipeline:

| Stage | Method | Example |

|---|---|---|

| 1. Rule-based pre-grouping | Strip diacritics, corporate suffixes ("BV", "LLC"), hyphenated product-line suffixes | "Nike Netherlands BV" and "Nike-Footwear" both map to "Nike" |

| 2. Embedding similarity | Embed each group's display name, cluster by cosine similarity (threshold: 0.82) | "Maison Margiela" and "MM6 Maison Margiela" cluster together |

| 3. Prefix merge | Merge clusters sharing a common prefix (min 8 characters) | "Comme des Garcons Homme" + "Comme des Garcons Play" merge to "Comme des Garcons" |

The resulting vendor map is cached in memory with a 1-hour TTL.
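Stages 2 and 3 of the table can be sketched with two small helpers. The 0.82 threshold and the 8-character minimum come from the table; the greedy first-match clustering strategy, the word-boundary trimming in the prefix step, and all names are assumptions, since the source does not describe the clustering algorithm itself.

```typescript
function cosineSimilarity(a: number[], b: number[]): number {
  let dot = 0;
  let normA = 0;
  let normB = 0;
  for (let i = 0; i < a.length; i++) {
    dot += a[i] * b[i];
    normA += a[i] * a[i];
    normB += b[i] * b[i];
  }
  return normA === 0 || normB === 0 ? 0 : dot / Math.sqrt(normA * normB);
}

type VendorGroup = { label: string; vector: number[] };

// Greedy single pass: a group joins the first cluster whose seed vector is similar enough.
function clusterVendors(groups: VendorGroup[], threshold = 0.82): string[][] {
  const clusters: { seed: number[]; labels: string[] }[] = [];
  for (const g of groups) {
    const home = clusters.find((c) => cosineSimilarity(c.seed, g.vector) >= threshold);
    if (home) home.labels.push(g.label);
    else clusters.push({ seed: g.vector, labels: [g.label] });
  }
  return clusters.map((c) => c.labels);
}

// Shared prefix of two names, cut back to a whole word, or null if shorter than minChars.
function sharedPrefix(a: string, b: string, minChars = 8): string | null {
  let i = 0;
  while (i < a.length && i < b.length && a[i] === b[i]) i++;
  const endsOnBoundary = (s: string) => i === s.length || s[i] === " ";
  let prefix = a.slice(0, i);
  if (!(endsOnBoundary(a) && endsOnBoundary(b))) {
    prefix = prefix.slice(0, prefix.lastIndexOf(" ") + 1); // drop the partial trailing word
  }
  prefix = prefix.trim();
  return prefix.length >= minChars ? prefix : null;
}
```

The minimum length guards against merging unrelated vendors that merely share a short first word.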

Structured Output via XML Tags

All Gemini responses use custom XML tags for structured data extraction rather than Gemini’s native JSON mode:

| XML Tag | Used By | Contains |

|---|---|---|

| <biometric_analysis> | Photo analysis | JSON: body type, posture, color season, palette |

| <wizard_questions> | Question generation | JSON: array of questions with option arrays |

| <wizard_recommendations> | Product ranking | JSON: ranked products with archetypes/slots and rationale |

| <no_person> | Photo analysis | Empty; signals no human detected in photo |

This design enables mixed-content responses (conversational text alongside structured data) and avoids the rigidity of pure JSON mode, where the model cannot include explanatory prose outside the schema.

Software Stack

| Component | Technology | Purpose |

|---|---|---|

| Framework | Next.js 16.2 / React 19 | Full-stack application with API routes |

| Vision + Reasoning | Gemini 3 Flash Preview | Biometric analysis, question generation, product ranking |

| Embeddings | Gemini Embedding 001 (1,536-dim) | Product vectorization and query embedding |

| Image Generation | Nano Banana Pro Preview | AI-generated outfit visualization |

| Vector Database | Upstash Vector | Semantic product search with metadata storage |

| Inventory Source | 52 Shopify Stores (Public JSON API) | Live product catalog with daily sync |

| Deployment | Vercel (Cron Jobs + Serverless Functions) | Hosting, scheduling, and edge delivery |

| Styling | Tailwind CSS 4 | Utility-first CSS framework |

| State Management | React useReducer (13 action types) | Wizard step progression and data accumulation |

UX Engineering

Perceived Performance

The system makes 3-4 sequential AI calls, each taking 5-30 seconds. Rather than showing a static spinner, the team implemented several strategies to maintain engagement during processing:

Question Prefetching: When the user types their movement goal, a debounced prefetch (800ms) fires to generate follow-up questions in the background. By the time they complete the biometric scan (Steps 2-3), questions are already cached in a module-level Map. All four quick-pill options are prefetched on component mount.

Product Teaser Carousel: During the biometric analysis and question loading states, the UI displays an Instagram Stories-style product carousel pulled from the vector DB. Products rotate with progress bars and swipe animations. This serves double duty: it keeps the user engaged and subtly introduces them to the catalog.

Fashion Video Loading: The final recommendation loading state displays a full-screen video carousel (three fashion-themed loops) with animated progress steps (“Scanning partner inventories”, “Cross-referencing your body profile”, “Ranking by fit confidence”). The videos are pre-loaded in /public/videos/.

Animated Scan Overlay: During photo analysis, the UI renders a cosmetic body-scan animation (scan lines, grid overlay, corner brackets, body silhouette guide) that progresses through five labeled stages (“Detecting body landmarks”, “Mapping proportions”, etc.). This is purely visual; the actual analysis is a single Gemini vision call.

Share Card Generation

The results view includes a shareable image generated entirely client-side using the Canvas 2D API. The canvas renders at 1080px width and composites product images, the customer’s color palette, pricing, and branding into a single PNG. Distribution uses the Web Share API (navigator.share() with file support) or falls back to a direct download.
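The choice between sharing and downloading can be sketched as a capability check. navigator.canShare() and navigator.share() are part of the Web Share API; the structural types and the pickDistribution helper below are hypothetical, introduced so the decision can be exercised outside a browser.

```typescript
// Minimal structural types so the check does not depend on browser globals.
type CardSharePayload = { files: unknown[]; title?: string };
type ShareCapable = {
  canShare?: (data: CardSharePayload) => boolean;
  share?: (data: CardSharePayload) => Promise<void>;
};

// Use the Web Share API only when the browser reports it can share this payload.
function pickDistribution(nav: ShareCapable, data: CardSharePayload): "share" | "download" {
  const supported =
    typeof nav.share === "function" &&
    typeof nav.canShare === "function" &&
    nav.canShare(data);
  return supported ? "share" : "download";
}

// In the browser, nav would be window.navigator and data.files would hold the PNG
// produced from the canvas (for example via canvas.toBlob wrapped in a File).
```

Checking canShare with the actual file payload matters because some browsers implement share() for text and URLs but not for files.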

Store Coverage

The platform indexes products from 52 Shopify storefronts spanning the specialty retail ecosystem:

| Category | Stores | Examples |

|---|---|---|

| Athletic / Running | 6 | Allbirds, NOBULL, Outdoor Voices, Satisfy, Janji, 2XU |

| Gym / Training | 4 | LSKD, Ryderwear, Alphalete, Hylete |

| Women's Activewear | 2 | SET Active, Girlfriend Collective |

| Sneakers / Streetwear | 6 | Undefeated, NRML, Palace, Stussy, BAPE, Feature |

| Designer / Luxury | 10+ | Rick Owens, Maison Margiela, Fear of God, Sacai, KidSuper |

| Multi-Brand Retailers | 8+ | DTLR, Social Status, Packer Shoes, Shoe Palace, gravitypope |

| Outdoor / Yoga | 3 | Cotopaxi, YogaOutlet, Manduka |

| Essentials / Basics | 4+ | Marine Layer, Sunspel, Ten Thousand, Carbon38 |

Each store is crawled via the Shopify public product JSON API (/products.json?limit=250) with pagination. Product records are normalized into a consistent schema before embedding.
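A crawl along these lines might look like the sketch below. The limit=250 endpoint, the 8-second timeout, and the use of Promise.allSettled() come from this document; the page query parameter, the stop-on-short-page rule, the injected fetchJson function, and the RawProduct shape are assumptions for illustration.

```typescript
type JsonFetcher = (url: string) => Promise<unknown>;
type RawProduct = { id: number; title: string };

const PAGE_SIZE = 250;

// Assumption: pages are addressed with a 1-based `page` query parameter.
function productsPageUrl(storeBaseUrl: string, page: number): string {
  return `${storeBaseUrl.replace(/\/+$/, "")}/products.json?limit=${PAGE_SIZE}&page=${page}`;
}

// Reject if the work does not settle within ms; always clear the timer afterwards.
function withTimeout<T>(work: Promise<T>, ms: number): Promise<T> {
  return new Promise<T>((resolve, reject) => {
    const timer = setTimeout(() => reject(new Error(`timed out after ${ms} ms`)), ms);
    work.then(
      (value) => { clearTimeout(timer); resolve(value); },
      (err) => { clearTimeout(timer); reject(err); },
    );
  });
}

// Fetch pages until one comes back with fewer than PAGE_SIZE products.
async function crawlStore(baseUrl: string, fetchJson: JsonFetcher, timeoutMs = 8000): Promise<RawProduct[]> {
  const all: RawProduct[] = [];
  for (let page = 1; ; page++) {
    const body = (await withTimeout(fetchJson(productsPageUrl(baseUrl, page)), timeoutMs)) as { products?: RawProduct[] };
    const batch = body.products ?? [];
    all.push(...batch);
    if (batch.length < PAGE_SIZE) return all;
  }
}

// Crawl every store; a failing store contributes nothing instead of aborting the run.
async function crawlAll(baseUrls: string[], fetchJson: JsonFetcher): Promise<RawProduct[]> {
  const settled = await Promise.allSettled(baseUrls.map((url) => crawlStore(url, fetchJson)));
  return settled.flatMap((result) => (result.status === "fulfilled" ? result.value : []));
}
```

Using Promise.allSettled() rather than Promise.all() means one unreachable storefront does not discard the results from the others.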

API Route Summary

| Route | Method | Max Duration | Purpose |

|---|---|---|---|

| /api/wizard/analyze | POST | 30s | Biometric photo analysis via Gemini vision |

| /api/wizard/questions | POST | 60s | Adaptive follow-up question generation |

| /api/wizard/recommend | POST | 60s | Vector search + LLM ranking |

| /api/wizard/outfit-image | POST | 60s | AI outfit image generation (on demand) |

| /api/wizard/sync | POST | 300s | Daily Shopify inventory sync to vector DB |

| /api/wizard/teasers | GET | default | Random products for loading-state carousels |

| /api/wizard/warmup | GET/POST | default | Vector index readiness check |

| /api/brands/vendors | GET | default | Deduplicated brand directory (cached 1hr) |

| /api/brands/products | GET | default | Products by vendor name |

| /api/brands/pairs | POST | default | Complementary product pairing |

| /api/brands/build-map | POST | 120s | Rebuild vendor embedding clusters |

| /api/collect-email | POST | default | Lead capture via Google Sheets webhook |

Brand routes use cache headers (s-maxage=3600, stale-while-revalidate=7200) for CDN-level caching. The teaser route maintains a 10-minute in-memory cache.
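The two caching layers can be sketched as a header constant and a small in-memory cache with a time-to-live. The header values and the 10-minute TTL come from the paragraph above; createTtlCache, brandCacheHeaders, and the injectable clock are hypothetical names chosen for this example.

```typescript
// CDN-level caching for brand routes: fresh for 1 hour, served stale for up to 2 more while revalidating.
function brandCacheHeaders(): Record<string, string> {
  return { "Cache-Control": "s-maxage=3600, stale-while-revalidate=7200" };
}

// Single-value in-memory cache; `now` is injectable so expiry can be tested without waiting.
function createTtlCache<T>(ttlMs: number, now: () => number = Date.now) {
  let entry: { value: T; expiresAt: number } | undefined;
  return {
    get(): T | undefined {
      if (entry && now() < entry.expiresAt) return entry.value;
      entry = undefined; // expired or never set
      return undefined;
    },
    set(value: T): void {
      entry = { value, expiresAt: now() + ttlMs };
    },
  };
}

// A teaser route would create one cache at module scope with a 10-minute TTL.
const TEASER_TTL_MS = 10 * 60 * 1000;
```

On serverless platforms a module-level cache lives only as long as the function instance stays warm, so it reduces vector DB reads rather than guaranteeing them away.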

Estimated Cost Analysis

Per-Session AI Costs

Each customer session makes 3 required Gemini calls, with an optional 4th for outfit visualization:

Gemini API Cost Per Session (Estimated)

| Call | Model | Est. Input Tokens | Est. Output Tokens | Est. Cost |

|---|---|---|---|---|

| Biometric Analysis | gemini-3-flash-preview | ~2,000 (text) + image | ~800 | ~$0.002 |

| Question Generation | gemini-3-flash-preview | ~1,500 | ~600 | ~$0.001 |

| Product Ranking | gemini-3-flash-preview | ~8,000 (150 products + profile) | ~2,000 | ~$0.005 |

| Query Embedding | gemini-embedding-001 | ~200 | N/A | ~$0.0001 |

| Outfit Image (optional) | nano-banana-pro-preview | ~500 + image | 1 image | ~$0.01 |

| Total (without image gen) | | | | ~$0.008 |

| Total (with image gen) | | | | ~$0.018 |

Infrastructure Costs

Monthly Operating Costs (Estimated)

| Service | Usage | Monthly Cost |

|---|---|---|

| Vercel Pro | Hosting, cron jobs, serverless functions | $20 |

| Upstash Vector | ~10K+ vectors, daily sync queries + user queries | $25-50 |

| Gemini API (1,000 sessions/mo) | 3-4 calls per session | $8-18 |

| Gemini Embeddings (sync) | Daily re-embedding of changed products | ~$1-5 |

| Total (1,000 sessions/mo) | | $54-93 |
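The totals in the two cost tables can be reproduced with a few lines of arithmetic. All dollar figures below are the estimates from the tables; the type and function names are hypothetical, and rounding the Gemini line to whole dollars is an assumption that matches the $8-18 range shown.

```typescript
type CostRange = { low: number; high: number };

// Estimated per-session costs in USD, taken from the per-session table.
const perSessionUsd = {
  biometric: 0.002,
  questions: 0.001,
  ranking: 0.005,
  queryEmbedding: 0.0001,
  outfitImage: 0.01, // optional fourth call
};

// Monthly Gemini spend: low = required calls only, high = every session adds an outfit image.
function geminiMonthlyUsd(sessions: number): CostRange {
  const required =
    perSessionUsd.biometric + perSessionUsd.questions + perSessionUsd.ranking + perSessionUsd.queryEmbedding;
  return {
    low: Math.round(required * sessions),
    high: Math.round((required + perSessionUsd.outfitImage) * sessions),
  };
}

function sumRanges(items: CostRange[]): CostRange {
  return items.reduce((acc, r) => ({ low: acc.low + r.low, high: acc.high + r.high }), { low: 0, high: 0 });
}

const monthlyTotal = sumRanges([
  { low: 20, high: 20 }, // Vercel Pro
  { low: 25, high: 50 }, // Upstash Vector
  geminiMonthlyUsd(1000), // Gemini API at 1,000 sessions: $8-18
  { low: 1, high: 5 }, // Gemini embeddings for the daily sync
]);
// monthlyTotal is { low: 54, high: 93 }

const annualTotal: CostRange = { low: monthlyTotal.low * 12, high: monthlyTotal.high * 12 };
// annualTotal is { low: 648, high: 1116 }
```

Only the Gemini API line scales with session volume; the hosting and vector database lines are roughly fixed at this usage level.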

Comparison: AI Advisor vs. Human Associate

Annual Cost Comparison (1,000 sessions/month)

| Cost Category | Human Associate | AuraFits Platform |

|---|---|---|

| Salary / Platform Costs | $45,000-65,000 | $648-1,116 |

| Training & Onboarding | $2,000-5,000 | $0 |

| Availability | ~40 hrs/week | 24/7 |

| Product Knowledge | 1-3 brand specialties | 52 stores, full catalog |

| Sessions per Day | 15-25 | Unlimited concurrent |

| Consistency | Varies by associate, shift, mood | Same prompts and ranking pipeline for every session |

The platform is not a replacement for human associates. It is a force multiplier: every associate gets the product memory of the entire catalog and the body-analysis capability that previously required specialized training.

Key Design Decisions

- Gemini-only AI stack: A single provider (Google) handles vision, reasoning, embeddings, and image generation. This eliminates cross-provider API key management, billing fragmentation, and latency from routing between services. The tradeoff is vendor lock-in to Google’s model ecosystem.

- XML-tagged structured output over JSON mode: Gemini’s responses embed structured data in custom XML tags (<biometric_analysis>, <wizard_questions>, etc.) rather than using native JSON mode. This allows mixed-content responses where conversational prose and structured data coexist, and avoids the rigidity of pure schema-constrained output.

- Live Shopify scraping over static catalogs: Products are fetched daily from 52 Shopify stores via their public JSON API, not maintained in a static database. Recommendations therefore reflect inventory as of the most recent daily sync, at the cost of a dependency on third-party store availability and API stability.

- Client-side image downsampling: Photos are resized to max 1024px at 70% JPEG quality before leaving the browser. This reduces upload size and API token costs while preserving sufficient detail for body-type estimation.

- No server-side session persistence: Wizard state lives entirely in React’s useReducer. A page refresh loses all progress. This is a deliberate simplicity tradeoff: no session database, no session store, no authentication.

- Embedding-based vendor deduplication: Rather than maintaining a manual brand-name mapping table, the system embeds vendor names and clusters by cosine similarity (threshold 0.82) with rule-based pre-grouping. This adapts automatically as new stores are added.

Key Takeaways

- Multimodal AI enables a new class of retail experience. Combining vision (body analysis), language (adaptive conversation), and search (vector retrieval) in a single flow produces recommendations that no single capability could achieve alone.

- Live inventory beats static catalogs. Daily synchronization from 52 Shopify stores keeps recommendations tied to products that were listed at the last sync. The incremental sync with content hashing keeps embedding costs proportional to change volume, not catalog size.

- Perceived performance is a design problem, not just an engineering one. Three sequential AI calls totaling 30-60 seconds would be intolerable with static spinners. Product teaser carousels, fashion videos, and animated scan overlays transform wait time into engagement.

- Embedding-based deduplication scales better than manual mappings. The vendor clustering pipeline (rule-based pre-grouping, cosine similarity at 0.82, prefix merge) handles the messy reality of multi-store data without a manually curated brand dictionary.

- The infrastructure footprint is small. The entire platform runs on Vercel serverless functions, one Upstash Vector index, and the Gemini API. No relational database, no GPU instances, no container orchestration. Monthly infrastructure costs for 1,000 sessions are under $100.

References

[1] U.S. Bureau of Labor Statistics. "Retail Sales Workers: Occupational Outlook Handbook." BLS, 2024.

[2] Google. "Gemini API Documentation." Google AI for Developers, 2025.

[3] Upstash. "Upstash Vector: Serverless Vector Database." Upstash Documentation, 2025.

[4] Shopify. "Shopify Admin REST API Reference." Shopify Dev, 2025.

[5] Reimers, N., and Gurevych, I. "Sentence-BERT: Sentence Embeddings using Siamese BERT-Networks." EMNLP, 2019.

[6] Johnson, J., Douze, M., and Jégou, H. "Billion-scale Similarity Search with GPUs." IEEE Transactions on Big Data, 2019.

[7] Lewis, P., et al. "Retrieval-Augmented Generation for Knowledge-Intensive NLP Tasks." NeurIPS, 2020.

[8] Jia, C., et al. "Scaling Up Visual and Vision-Language Representation Learning With Noisy Text Supervision." ICML, 2021.

[9] Karpukhin, V., et al. "Dense Passage Retrieval for Open-Domain Question Answering." EMNLP, 2020.

[10] National Retail Federation. "State of Retail Technology Report." NRF, 2024.